About

I am Hsin-Yuan Huang (黃信元, pronounced “Shin Yuan Huan”). I also go by Robert.

I am the CTO of Oratomic. I am currently on leave from my position as Assistant Professor of Theoretical Physics at Caltech. I was previously a Staff Research Scientist at Google Quantum AI, a visiting faculty member at Stanford University, and a visiting scientist at the MIT Center for Theoretical Physics and the Simons Institute for the Theory of Computing, UC Berkeley. I received my Ph.D. under the guidance of John Preskill and Thomas Vidick. My doctoral dissertation, titled Learning in the Quantum Universe, was honored with the Milton and Francis Clauser Doctoral Prize, awarded annually to the single doctoral dissertation across all disciplines at Caltech that demonstrates the highest degree of originality and potential for opening up new avenues of human thought and endeavor.

Research Interest

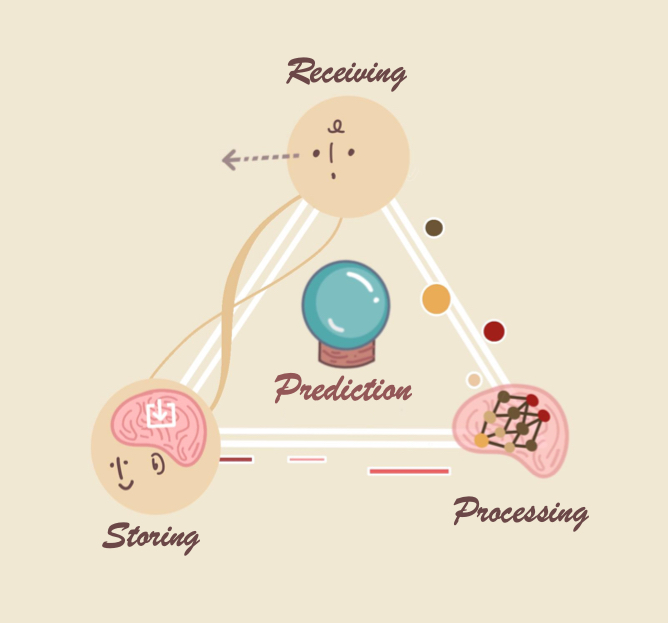

My research aims to build a rigorous foundation for understanding how scientists, machines, and future quantum computers can learn and discover new phenomena governing our quantum-mechanical universe (molecules, materials, pharmaceutics, exotic quantum matter, engineered quantum devices, etc.).

I leverage quantum information theory, quantum many-body physics, learning theory, and complexity theory to formalize and explore new questions in the following directions:

- How to accelerate/automate the development of quantum and physical sciences?

- When can quantum machines learn and predict better than classical machines?

- What physical phenomena can classical vs quantum machines learn and discover?

My ultimate goal is to build quantum machines capable of discovering new facets of our universe beyond the capabilities of humans and classical machines.

Teaching

Fall 2025: Caltech Ph 220 Quantum Learning Theory

Students

- Aditya Bhardwaj

- Xinyu Liu (with John Preskill)

- Nadine Meister (with Manuel Endres)

- Haimeng Zhao (with John Preskill)

- Muzhou (Richard) Ma (with John Preskill)

- Zachary Mann (with John Preskill)

- Zhihan Zhang

Publications

Google Scholar provides a full list, sortable in chronological or citation order.

Exponential quantum advantage in processing massive classical data

H. Zhao, A. Zlokapa, H. Neven, R. Babbush, J. Preskill, J. R. McClean, H.-Y. Huang.

arXiv (2026). [PDF] [New Scientist]

Shor’s algorithm is possible with as few as 10,000 reconfigurable atomic qubits

M. Cain, Q. Xu, R. King, L. R. B. Picard, H. Levine, M. Endres, J. Preskill, H.-Y. Huang, D. Bluvstein.

arXiv (2026). [PDF] [Caltech News] [Quanta Magazine] [Nature News] [Science News] [Ars Technica] [Time Magazine]

Strong random unitaries and fast scrambling

T. Schuster, F. Ma, A. Lombardi, F. Brandao, H.-Y. Huang.

Plenary talk at QIP (2026). [PDF]

Random unitaries that conserve energy

L. Mao, L. Cui, T. Schuster, H.-Y. Huang.

Contributed talk at QIP (2026). [PDF]

Random unitaries from Hamiltonian dynamics

L. Cui, T. Schuster, L. Mao, H.-Y. Huang, F. Brandao.

Contributed talk at QIP (2026). [PDF]

Hardness of recognizing phases of matter

T. Schuster, D. Kufel, N. Yao, H.-Y. Huang.

Contributed talk at QIP (2026). [PDF]

Quantum Computing Enhanced Sensing

R. R. Allen, F. Machado, I. L. Chuang, H.-Y. Huang, S. Choi.

Contributed talk at QIP (2026). [PDF]

Quantum Probe Tomography

S. Chen, J. Cotler, H.-Y. Huang.

arXiv (2025). [PDF]

Quantum learning advantage on a scalable photonic platform

Z.-H. Liu, R. Brunel, E. E. B. Østergaard, O. Cordero, S. Chen, Y. Wong, J. A. H. Nielsen, A. B. Bregnsbo, S. Zhou, H.-Y. Huang, C. Oh, L. Jiang, J. Preskill, J. S. Neergaard-Nielsen, U. L. Andersen.

Science (2025). [PDF] [UChicago News] [TU Denmark News] [Perimeter Institute News]

Generative quantum advantage for classical and quantum problems

H.-Y. Huang, M. Broughton, N. Eassa, H. Neven, R. Babbush, J. R. McClean.

arXiv (2025). [PDF]

Certifying almost all quantum states with few single-qubit measurements

(alphabetical order) H.-Y. Huang, J. Preskill, M. Soleimanifar.

Nature Physics (2025), FOCS (2024), Contributed talk at QIP (2024).

[PDF] [PDF for Nature Physics] [Thread on X] [GitHub Code] [Invited talk at IBM] [Phys.org News]

The vast world of quantum advantage

H.-Y. Huang, S. Choi, J. R. McClean, J. Preskill.

arXiv (2025). [PDF]

Unitary designs in nearly optimal depth

L. Cui, T. Schuster, F. Brandao, H.-Y. Huang.

arXiv (2025). [PDF]

Random unitaries in extremely low depth

T. Schuster, J. Haferkamp, H.-Y. Huang.

Science (2025), Long plenary talk at QIP (2025).

[PDF] [Thread on X] [Science Perspective] [New Scientist] [Phys.org News] [Caltech Story]

Local minima in quantum systems

(alphabetical order) C.-F. Chen, H.-Y. Huang, J. Preskill, L. Zhou.

Nature Physics (2025), STOC (2024), Contributed talk at QIP (2024).

[PDF] [Thread on X] [Quanta Magazine] [Caltech Story]

How to construct random unitaries

F. Ma, H.-Y. Huang.

STOC (2025), Short plenary talk at QIP (2025).

Invited to SIAM Journal on Computing Special Issue.

[PDF] [Quanta Magazine]

Classically estimating observables of noiseless quantum circuits

A. Angrisani, A. Schmidhuber, M. S. Rudolph, M. Cerezo, Z. Holmes, H.-Y. Huang.

Physical Review Letters (2025). [PDF]

Myths around quantum computation before full fault tolerance: What no-go theorems rule out and what they don’t

Z. Zimborás, B. Koczor, Z. Holmes, E.-M. Borrelli, A. Gilyén, H.-Y. Huang, Z. Cai, A. Acín, L. Aolita, L. Banchi, F. G. S. L. Brandão, D. Cavalcanti, T. Cubitt, S. N. Filippov, G. García-Pérez, J. Goold, O. Kálmán, E. Kyoseva, M. A. C. Rossi, B. Sokolov, I. Tavernelli, S. Maniscalco.

arXiv (2025). [PDF]

Predicting quantum channels over general product distributions

(alphabetical order) S. Chen, J. D. Pont, J.-T. Hsieh, H.-Y. Huang, J. Lange, J. Li.

COLT (2025). [PDF]

Learning shallow quantum circuits with many-qubit gates

F. Vasconcelos, H.-Y. Huang.

COLT (2025). [PDF]

Quantum error correction below the surface code threshold

(alphabetical order) Google Quantum AI and Collaborators.

Nature (2024). [PDF]

[Quanta Magazine] [Physics News] [Nature News] [New York Times]

Entanglement-enabled advantage for learning a bosonic random displacement channel

C. Oh, S. Chen, Y. Wong, S. Zhou, H.-Y. Huang, J. A. H. Nielsen, Z.-H. Liu, J. S. Neergaard-Nielsen, U. L. Andersen, L. Jiang, J. Preskill.

Physical Review Letters (2024). [PDF]

Predicting adaptively chosen observables in quantum systems

J. Huang, L. Lewis, H.-Y. Huang, J. Preskill.

arXiv (2024). [PDF]

Tight bounds on Pauli channel learning without entanglement

S. Chen, C. Oh, S. Zhou, H.-Y. Huang, L. Jiang.

Physical Review Letters (2024). [PDF]

Learning shallow quantum circuits

H.-Y. Huang$\dagger$ (co-first author), Y. Liu$\dagger$, M. Broughton, I. Kim, A. Anshu, Z. Landau, J. R. McClean.

STOC (2024), Short plenary talk at QIP (2024).

Invited to SIAM Journal on Computing Special Issue.

[PDF] [QIP Slide] [Thread on X] [PennyLane Demo]

Learning quantum states and unitaries of bounded gate complexity

H. Zhao, L. Lewis, I. Kannan, Y. Quek, H.-Y. Huang, M. C. Caro.

PRX Quantum (2024). [PDF]

Learning conservation laws in unknown quantum dynamics

Y. Zhan, A. Elben, H.-Y. Huang, Y. Tong.

PRX Quantum (2024). [PDF]

Improved machine learning algorithm for predicting ground state properties

L. Lewis, H.-Y. Huang, V. T. Tran, S. Lehner, R. Kueng, J. Preskill.

Nature Communications (2024), Contributed talk at QIP (2023).

[PDF] [GitHub Code] [Thread on X] [Quanta Magazine]

Dynamical simulation via quantum machine learning with provable generalization

J. Gibbs, Z. Holmes, M. C. Caro, N. Ezzell, H.-Y. Huang, L. Cincio, A. T. Sornborger, P. J. Coles.

Physical Review Research (2024). [PDF]

On quantum backpropagation, information reuse, and cheating measurement collapse

A. Abbas, R. King, H.-Y. Huang, W. J. Huggins, R. Movassagh, D. Gilboa, J. R. McClean.

NeurIPS 2023 Spotlight. [PDF]

Learning to predict arbitrary quantum processes

H.-Y. Huang, S. Chen, J. Preskill.

PRX Quantum (2023), Contributed talk at QIP (2023).

[PDF] [GitHub Code] [Thread on X]

The complexity of NISQ

(alphabetical order) S. Chen, J. Cotler, H.-Y. Huang, J. Li.

Nature Communications (2023), Contributed talk at QIP (2023). [PDF]

Out-of-distribution generalization for learning quantum dynamics

M. C. Caro$\dagger$, H.-Y. Huang$\dagger$ (co-first author), N. Ezzell, J. Gibbs, A. T. Sornborger, L. Cincio, P. J. Coles, Z. Holmes.

Nature Communications (2023). [PDF] [News]

Learning many-body Hamiltonians with Heisenberg-limited scaling

H.-Y. Huang$\dagger$ (co-first author), Y. Tong$\dagger$, Di Fang, Yuan Su.

Physical Review Letters (2023), Short plenary talk at QIP (2023). [PDF] [Thread on X]

The power and limitations of learning quantum dynamics incoherently

S. Jerbi, J. Gibbs, M. S. Rudolph, M. C. Caro, P. J. Coles, H.-Y. Huang, Z. Holmes.

arXiv (2023). [PDF]

Preparing random states and benchmarking with many-body quantum chaos

J. Choi, A. L. Shaw, I. S. Madjarov, X. Xie, R. Finkelstein, J. P. Covey, J. S. Cotler, D. K. Mark, H.-Y. Huang, A. Kale, H. Pichler, F. G. S. L. Brandao, S. Choi, M. Endres.

Nature (2023). [PDF]

Emergent quantum state designs from individual many-body wavefunctions

J. Cotler$\dagger$, D. Mark$\dagger$, H.-Y. Huang$\dagger$ (co-first author), F. Hernandez, J. Choi, A. L. Shaw, M. Endres, S. Choi.

PRX Quantum (2023). [PDF]

The randomized measurement toolbox

(alphabetical order) A. Elben, S. Flammia, H.-Y. Huang, R. Kueng, J. Preskill, B. Vermersch, P. Zoller.

Nature Reviews Physics (2022). [PDF] [Tutorial at QIP 2022]

Hardware-efficient learning of quantum many-body states

K. V. Kirk, J. Cotler, H.-Y. Huang, M. D. Lukin.

arXiv (2022). [PDF]

Provably efficient machine learning for quantum many-body problems

H.-Y. Huang, R. Kueng, G. Torlai, V. V. Albert, J. Preskill.

Science (2022), Long plenary talk at QIP (2022).

[PDF] [GitHub Code] [Thread on X] [Caltech News] [Phys.org News] [IEEE Spectrum]

[PennyLane Tutorial] [Invited talk at Simons Institute] [Quanta Magazine]

Challenges and opportunities in quantum machine learning

M. Cerezo, G. Verdon, H.-Y. Huang, L. Cincio, P. Coles.

Nature Computational Science (2022). [PDF]

Generalization in quantum machine learning from few training data

M. C. Caro, H.-Y. Huang, M. Cerezo, K. Sharma, A. Sornborger, L. Cincio, P. J. Coles.

Nature Communications (2022).

[PDF] [Los Alamos News] [PennyLane Tutorial]

Quantum advantage in learning from experiments

H.-Y. Huang, M. Broughton, J. Cotler, S. Chen, J. Li, M. Mohseni, H. Neven, R. Babbush, R. Kueng, J. Preskill, J. R. McClean.

Science (2022).

[PDF] [Thread on X] [arsTECHNICA News] [ScienceNews] [Phys.org News] [WIRED News] [NewScientist News] [Google AI blog] [PennyLane Tutorial] [Invited talk at IBM] [Nature News]

Foundations for learning from noisy quantum experiments

H.-Y. Huang, S. Flammia, J. Preskill.

Contributed talk at QIP (2022). [PDF]

Learning quantum states from their classical shadows

H.-Y. Huang.

Nature Reviews Physics (2022). [PDF] [Quanta Magazine]

Exponential separation between learning with and without quantum memory

(alphabetical order) S. Chen, J. Cotler, H.-Y. Huang, J. Li.

FOCS (2021), Contributed talk at QIP (2022).

Invited to SIAM Journal on Computing Special Issue. [PDF]

Revisiting dequantization and quantum advantage in learning tasks

(alphabetical order) J. Cotler, H.-Y. Huang, J. R. McClean.

arXiv (2021). [PDF]

A hierarchy for replica quantum advantage

(alphabetical order) S. Chen, J. Cotler, H.-Y. Huang, J. Li.

arXiv (2021). [PDF]

What the foundations of quantum computer science teach us about chemistry

J. R. McClean, N. C. Rubin, J. Lee, M. P. Harrigan, T. E. O’Brien, R. Babbush, W. J. Huggins, H.-Y. Huang.

Journal of Chemical Physics (2021). [PDF] [Talk at Simons Institute]

Efficient estimation of Pauli observables by derandomization

H.-Y. Huang, R. Kueng, J. Preskill.

Physical Review Letters (2021), TQC (2021). [PDF] [GitHub Code]

Power of data in quantum machine learning

H.-Y. Huang, M. Broughton, M. Mohseni, R. Babbush, S. Boixo, H. Neven, J. R. McClean.

Nature Communications (Featured), Contributed talk at QIP (2021).

[PDF] [Talk at QIP] [Google AI blog] [TensorFlow blog] [TensorFlow Quantum Tutorial]

Information-theoretic bounds on quantum advantage in machine learning

H.-Y. Huang, R. Kueng, J. Preskill.

Physical Review Letters (Editor’s Suggestion), Contributed talk at QIP (2021).

[PDF] [Talk at QIP] [IQIM blog] [Quanta Magazine]

Nearly-tight Trotterization of interacting electrons

Y. Su, H.-Y. Huang, E. Campbell.

Quantum (2021), Contributed talk at QIP (2021). [PDF] [Talk at QIP]

Concentration for random product formulas

C.-F. Chen$\dagger$, H.-Y. Huang$\dagger$ (co-first author), R. Kueng, J. Tropp.

PRX Quantum (2021), TQC (2021). [PDF] [Talk at TQC]

Near-term quantum algorithms for linear systems of equations

H.-Y. Huang, K. Bharti, P. Rebentrost.

New Journal of Physics (2021). [PDF]

TensorFlow Quantum: A Software Framework for Quantum Machine Learning

M. Broughton, G. Verdon, T. McCourt, A. J. Martinez, J. H. Yoo, S. V. Isakov, P. Massey, R. Halavati, M. Y. Niu, A. Zlokapa, E. Peters, O. Lockwood, A. Skolik, S. Jerbi, V. Dunjko, M. Leib, M. Streif, D. V. Dollen, H. Chen, S. Cao, R. Wiersema, H.-Y. Huang, J. R. McClean, R. Babbush, S. Boixo, D. Bacon, A. K. Ho, H. Neven, M. Mohseni.

arXiv (2020). [PDF] [TFQ Website]

Mixed-state entanglement from local randomized measurements

A. Elben, R. Kueng, H.-Y. Huang, R. van Bijnen, C. Kokail, M. Dalmonte, P. Calabrese, B. Kraus, J. Preskill, P. Zoller, B. Vermersch.

Physical Review Letters (2020). [PDF]

Predicting many properties in a quantum system from very few measurements

H.-Y. Huang, R. Kueng, J. Preskill.

Nature Physics (2020), Short plenary talk at QIP (2020).

[PDF] [GitHub Code] [Wikipedia page] [PennyLane Tutorial] [Talk by John Preskill] [Phys.org News] [Quanta Magazine]

FlowQA: grasping flow in history for conversational machine comprehension

H.-Y. Huang, E. Choi, W. Yih.

ICLR (2019). [PDF]

FusionNet: Fusing via Fully-aware attention with application to machine comprehension

H.-Y. Huang, C. Zhu, Y. Shen, W. Chen.

ICLR (2018). [PDF]

A unified algorithm for one-class structured matrix factorization with side information

H.-F. Yu, H.-Y. Huang, I. S. Dhillon, C.-J. Lin.

AAAI (2017). [PDF]

Linear and kernel classification: When to use which?

H.-Y. Huang, C.-J. Lin.

SDM (2016). [PDF]

Dissecting the human protein-protein interaction network via phylogenetic decomposition

C.-Y. Chen, A. Ho, H.-Y. Huang, H.-F. Juan, H.-C. Huang.

Scientific Reports (2014).